Patrik Polatsek, Miroslav Laco, Šimon Dekrét, Wanda Benesova, Martina Baránková, Bronislava Strnádelová, Jana Koróniová, Mária Gablíková

Abstract.

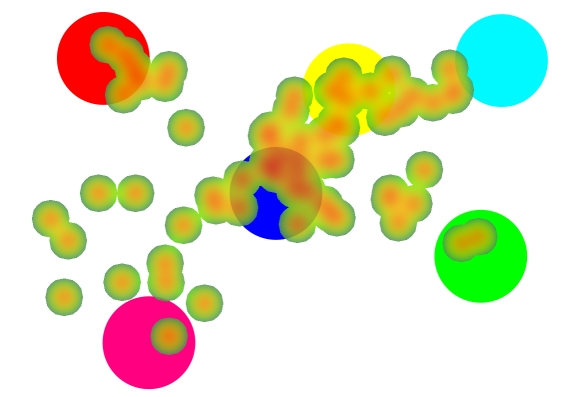

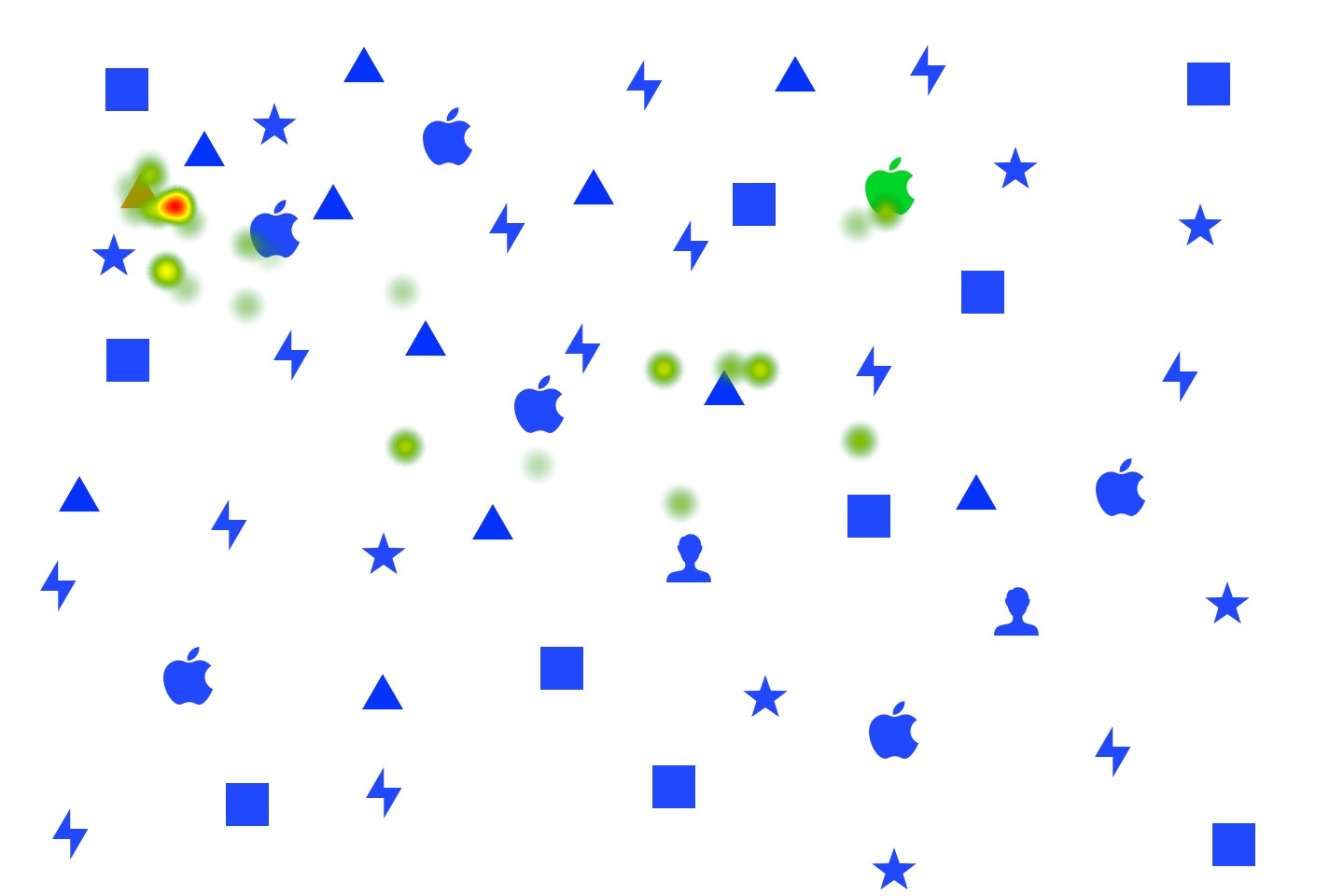

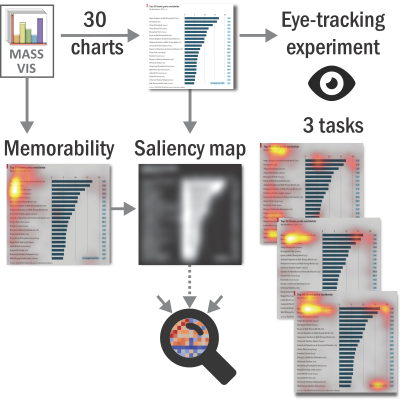

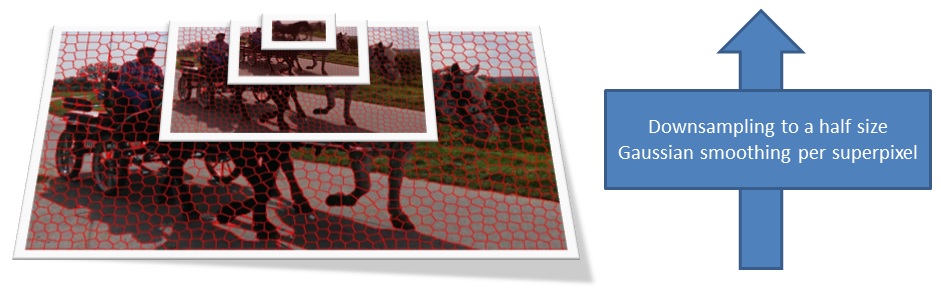

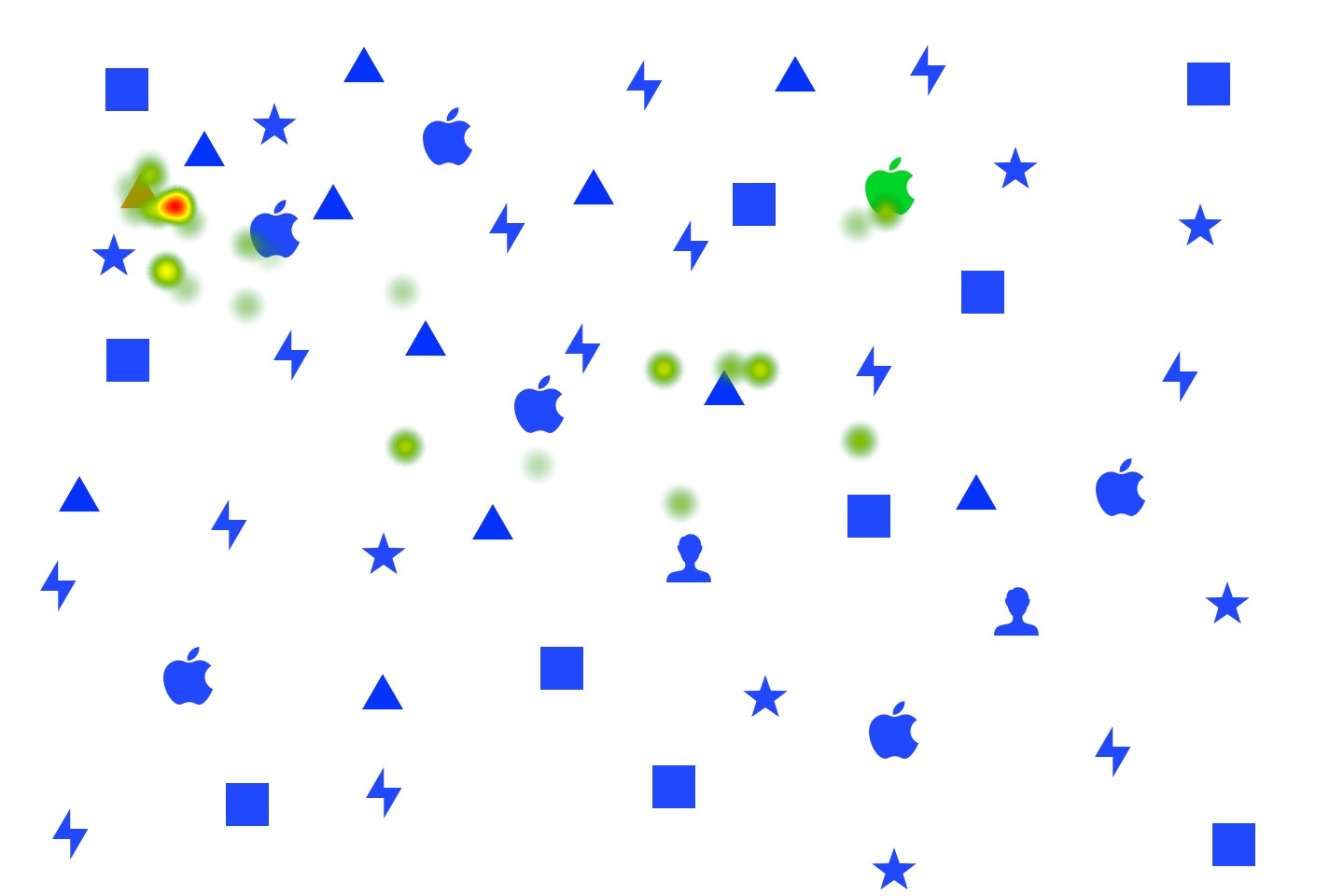

While psychological studies have confirmed a connection between emotional stimuli and visual attention, there is little evidence of how much an individual's mood influences the visual processing of emotionally neutral stimuli. In contrast to prior studies, we explored whether bottom-up, low-level saliency could be affected by positive mood. We therefore induced positive or neutral emotions in 10 subjects using autobiographical memories during free viewing, memorization of image content, and three visual search tasks. We explored differences in human gaze behavior between the two emotional states and related the subjects' fixations to bottom-up saliency predicted by a traditional computational model. We observed that positive emotions produce a stronger saliency effect only during free exploration of valence-neutral stimuli, whereas the task-based analysis showed the opposite effect. We also found that subjects solved the tasks less efficiently when experiencing a positive mood. We therefore suggest that a positive mood tends to distract users from a task rather than help them.
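The abstract does not name the measure used to relate fixations to the model's saliency map. A common choice is Normalized Scanpath Saliency (NSS): the mean of the z-scored saliency values at the fixated pixels. The sketch below makes two assumptions:

* the saliency map is a 2-D NumPy array;
* fixations are (row, column) pixel coordinates.

The function name and the input format are hypothetical and are not part of the released dataset.

```python
import numpy as np


def normalized_scanpath_saliency(saliency_map: np.ndarray,
                                 fixations: list[tuple[int, int]]) -> float:
    """Hypothetical helper: NSS of a set of fixations on one saliency map.

    saliency_map: 2-D array, e.g. the output of a bottom-up saliency model (assumed).
    fixations: (row, col) pixel coordinates of fixations (assumed format).
    """
    std = saliency_map.std()
    if std == 0:
        raise ValueError("saliency map is constant; NSS is undefined")
    # Z-score the map so values are comparable across images and models.
    z = (saliency_map - saliency_map.mean()) / std
    rows, cols = zip(*fixations)
    # Average the normalized saliency at the fixated pixels.
    return float(z[list(rows), list(cols)].mean())


if __name__ == "__main__":
    sal = np.zeros((4, 4))
    sal[1, 2] = 1.0  # a single salient pixel
    print(normalized_scanpath_saliency(sal, [(1, 2)]))  # fixation on the peak
    print(normalized_scanpath_saliency(sal, [(0, 0)]))  # fixation on background
```

A positive NSS means that fixations land on regions the model rates as more salient than average. Computing it separately for the positive-mood and neutral-mood groups is one way to compare the saliency effect between them.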

Download: saliency-emotions

Please cite this paper if you use the dataset:

Polatsek, P., Laco, M., Dekrét, Š., Benesova, W., Baránková, M., Strnádelová, B., Koróniová, J., & Gablíková, M. (2019). Effects of individual's emotions on saliency and visual search.

Ing. Patrik Polatsek – Dissertation thesis