Pavol Zbell

Introduction

In this work we focus on the basics of motion analysis and object tracking. We compare the MeanShift algorithm (non-parametric; finds an object in a back projection image) with CamShift (continuously adaptive mean shift; finds an object's center, size, and orientation) and use both to perform simple object tracking. If these algorithms fail to track the desired object, or the object moves out of the tracking window, we try to find another object to track. To achieve this, we use a background subtractor based on a Gaussian mixture background/foreground segmentation algorithm to identify the next candidate object. OpenCV provides two suitable implementations of this algorithm, BackgroundSubtractorMOG and BackgroundSubtractorMOG2, and we also compare the performance of the two.

Used functions: calcBackProject, calcHist, CamShift, cvtColor, inRange, meanShift, moments, normalize
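The histogram and back projection steps (calcHist with a mask, normalize with NORM_MINMAX, calcBackProject) can be illustrated without OpenCV. The following is a minimal sketch, not OpenCV's implementation: it assumes hue values already lie in OpenCV's 8-bit hue range 0-179 and stores images as flat vectors, and the function names hue_histogram, normalize_minmax, and back_project are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hue histogram with 180 bins (one per 8-bit OpenCV hue value 0-179),
// counting only pixels whose mask entry is true, as calcHist does with a mask.
std::vector<float> hue_histogram(const std::vector<std::uint8_t>& hue,
                                 const std::vector<bool>& mask) {
    assert(hue.size() == mask.size());
    std::vector<float> hist(180, 0.0f);
    for (std::size_t i = 0; i < hue.size(); ++i) {
        if (mask[i] && hue[i] < 180) hist[hue[i]] += 1.0f;
    }
    return hist;
}

// Min-max normalization to [0, 255], in the spirit of normalize(..., 0, 255, NORM_MINMAX).
void normalize_minmax(std::vector<float>& hist) {
    assert(!hist.empty());
    const float lo = *std::min_element(hist.begin(), hist.end());
    const float hi = *std::max_element(hist.begin(), hist.end());
    for (float& v : hist) {
        // A flat histogram carries no information; this sketch maps it to all zeros.
        v = (hi > lo) ? (v - lo) * 255.0f / (hi - lo) : 0.0f;
    }
}

// Back projection: each pixel receives the normalized histogram value of its hue bin,
// so pixels whose color is frequent in the ROI become bright.
std::vector<std::uint8_t> back_project(const std::vector<std::uint8_t>& hue,
                                       const std::vector<float>& hist) {
    assert(hist.size() == 180);
    std::vector<std::uint8_t> out(hue.size(), 0);
    for (std::size_t i = 0; i < hue.size(); ++i) {
        if (hue[i] < 180) out[i] = static_cast<std::uint8_t>(std::lround(hist[hue[i]]));
    }
    return out;
}
```

For example, an ROI with hues {10, 10, 10, 20} yields normalized bin values 255 at hue 10 and 85 at hue 20, so a frame pixel with hue 10 gets the brightest back projection value and a pixel with an unseen hue gets 0.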

Solution

1. Initialize the tracking window:
   - Set the tracking window near the frame center.

2. Track the object using MeanShift / CamShift:
   - Calculate the hue histogram of the region of interest (ROI) in HSV color space and track the object on its back projection.

```cpp
// Requires #include <opencv2/opencv.hpp> and using namespace cv;
// roi, roi_hsv, mask, roi_hist (Mat) and track_window (Rect) are declared elsewhere.

// Hue histogram of the initial ROI
int dims = 1;
int channels[] = {0};        // hue channel only
int hist_size[] = {180};     // one bin per 8-bit OpenCV hue value
float hranges[] = {0, 180};
const float *ranges[] = {hranges};

roi = frame(track_window);
cvtColor(roi, roi_hsv, cv::COLOR_BGR2HSV);
// clamp > H: 0 - 180, S: 60 - 255, V: 32 - 255 (drops dark and unsaturated pixels)
inRange(roi_hsv, Scalar(0.0, 60.0, 32.0), Scalar(180.0, 255.0, 255.0), mask);
calcHist(&roi_hsv, 1, channels, mask, roi_hist, dims, hist_size, ranges);
normalize(roi_hist, roi_hist, 0, 255, NORM_MINMAX);

// ...

// Back-project the ROI histogram onto the current frame and shift the window
Mat hsv, dst;
cvtColor(frame, hsv, cv::COLOR_BGR2HSV);
calcBackProject(&hsv, 1, channels, roi_hist, dst, ranges, 1);

clamp_rect(track_window, bounds);
print_rect("track-window", track_window);

Mat result = frame.clone();
RotatedRect rect;  // declared outside the branch so the lost-object check can use it
if (use_camshift) {
    rect = CamShift(dst, track_window, term_criteria);
    draw_rotated_rect(result, rect, Scalar(0, 0, 255));
} else {
    meanShift(dst, track_window, term_criteria);
    draw_rect(result, track_window, Scalar(0, 0, 255));
}
```

3. Tracked object lost?

   - In other words, is the centroid of the MOG mask outside the tracking window?

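```cpp
// Is the centroid of the MOG mask still inside the current tracking window?
bool contains;
if (use_camshift) {
    contains = rect.boundingRect().contains(center);
} else {
    contains = center.inside(track_window);
}
```

4. When the object is lost, reinitialize the tracking window: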

   - Center the tracking window on the centroid of the MOG mask.
   - Go back to step 2 and repeat.

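```cpp
// Foreground mask from the Gaussian mixture background subtractor
// (OpenCV 2.4 API; OpenCV 3 and later use mog->apply(frame, mask) instead)
mog->operator()(frame, mask);
center = compute_centroid(mask);
// 100x50 axis-aligned window centered on the centroid
track_window = RotatedRect(Point2f(center.x, center.y), Size2f(100, 50), 0).boundingRect();
```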
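The lost-object check and the reinitialization both rely on the centroid of the MOG mask, computed by the compute_centroid helper whose body is not shown. One way to obtain it, sketched here under the assumption of a binary mask stored as a flat row-major vector, uses raw image moments: the centroid is (m10/m00, m01/m00), the quantities cv::moments provides. The types Point and Rect and the functions mask_centroid, rect_contains, and window_around are hypothetical stand-ins, not OpenCV's types or the helpers used above.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <optional>
#include <vector>

// Hypothetical stand-ins for cv::Point and cv::Rect.
struct Point { int x, y; };
struct Rect { int x, y, width, height; };

// Centroid of a binary mask from raw image moments:
// m00 = number of foreground pixels, m10 = sum of their x, m01 = sum of their y.
// Any nonzero value counts as foreground (like cv::moments with binaryImage = true).
std::optional<Point> mask_centroid(const std::vector<std::uint8_t>& mask, int width, int height) {
    assert(width > 0 && height > 0);
    assert(mask.size() == static_cast<std::size_t>(width) * height);
    double m00 = 0.0, m10 = 0.0, m01 = 0.0;
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            if (mask[static_cast<std::size_t>(y) * width + x] != 0) {
                m00 += 1.0;
                m10 += x;
                m01 += y;
            }
        }
    }
    if (m00 == 0.0) return std::nullopt;  // empty mask: nothing to re-acquire
    return Point{static_cast<int>(std::lround(m10 / m00)),
                 static_cast<int>(std::lround(m01 / m00))};
}

// Half-open containment test, the same convention as cv::Rect::contains.
bool rect_contains(const Rect& r, const Point& p) {
    return r.x <= p.x && p.x < r.x + r.width && r.y <= p.y && p.y < r.y + r.height;
}

// Axis-aligned window of the given size centered on c (100x50 as in the reinitialization step).
Rect window_around(const Point& c, int w = 100, int h = 50) {
    return Rect{c.x - w / 2, c.y - h / 2, w, h};
}
```

Returning std::optional makes the empty-mask case explicit, which a plain division by m00 would hide. The resulting window can extend past the frame edges, so in practice it would still be passed through a clamp step such as the clamp_rect helper used above.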

Samples

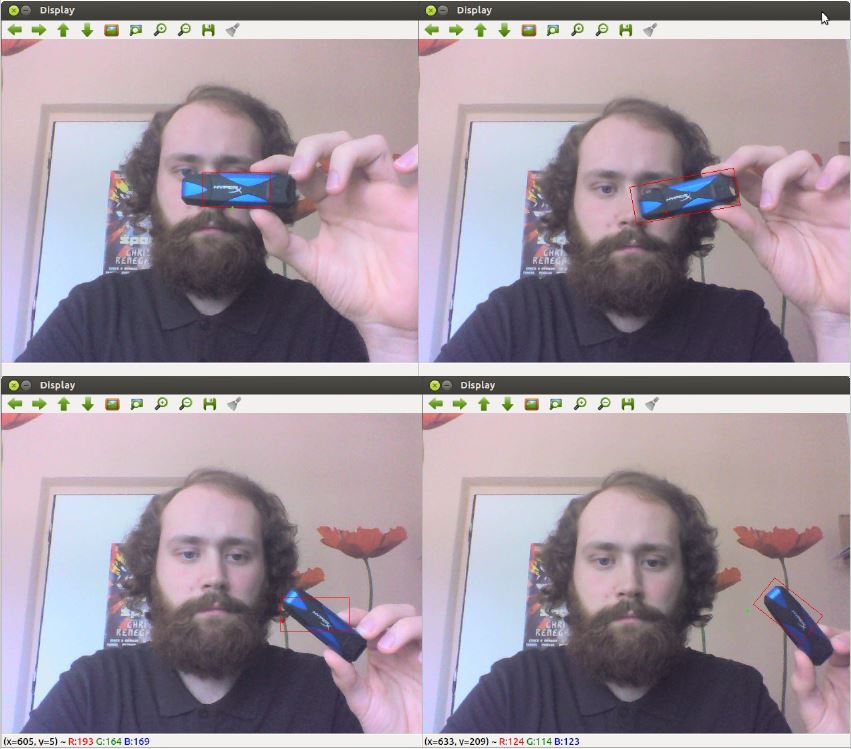

As seen in Fig. 1, MeanShift (left) operates with a fixed-size tracking window that cannot be rotated. In contrast, CamShift (right) takes full advantage of dynamically sized, rotated rectangles. In general, CamShift yielded significantly better tracking results. On the other hand, we recommend MeanShift when the object stays at a constant distance from the camera and moves without rotating (or is circular): in that case MeanShift runs faster than CamShift and produces sufficient results without the noise introduced by rotation and size changes.
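The fixed-size behavior of MeanShift follows from its core loop. Below is a simplified sketch, not OpenCV's meanShift: a fixed-size window is repeatedly moved so that its center lands on the weighted centroid of the back projection values inside it, until the shift drops below eps or max_iter is reached. The Window type and the mean_shift function are hypothetical, and the window is assumed to start inside the grid. CamShift builds on this step by additionally deriving the object's size and orientation from the moments, which this sketch omits.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical window type: top-left corner (x, y), width w, height h.
struct Window { int x, y, w, h; };

// Simplified mean shift on a row-major weight grid (e.g. a back projection image).
// Updates win in place and returns the number of iterations performed.
int mean_shift(const std::vector<double>& weights, int cols, int rows,
               Window& win, int max_iter, double eps) {
    assert(weights.size() == static_cast<std::size_t>(cols) * rows);
    assert(win.w > 0 && win.h > 0 && win.x >= 0 && win.y >= 0 &&
           win.x + win.w <= cols && win.y + win.h <= rows);
    int iter = 0;
    while (iter < max_iter) {
        ++iter;
        // Zeroth- and first-order moments of the weights inside the window.
        double m00 = 0.0, m10 = 0.0, m01 = 0.0;
        for (int y = win.y; y < win.y + win.h; ++y) {
            for (int x = win.x; x < win.x + win.w; ++x) {
                const double v = weights[static_cast<std::size_t>(y) * cols + x];
                m00 += v;
                m10 += v * x;
                m01 += v * y;
            }
        }
        if (m00 <= 0.0) break;  // nothing inside the window to follow
        // Move the window so its center lands on the weighted centroid, staying inside the grid.
        const int nx = std::clamp(static_cast<int>(std::lround(m10 / m00 - (win.w - 1) / 2.0)),
                                  0, cols - win.w);
        const int ny = std::clamp(static_cast<int>(std::lround(m01 / m00 - (win.h - 1) / 2.0)),
                                  0, rows - win.h);
        const double shift = std::hypot(nx - win.x, ny - win.y);
        win.x = nx;
        win.y = ny;
        if (shift < eps) break;  // converged: the window barely moved
    }
    return iter;
}
```

On a 10×10 grid with a 2×2 blob of weight 1 covering columns and rows 6-7, a 3×3 window starting at (4, 4) moves to (5, 5), then to (6, 6), and stops after the third iteration. Note that the window size never changes, which is exactly the limitation visible on the left of Fig. 1.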

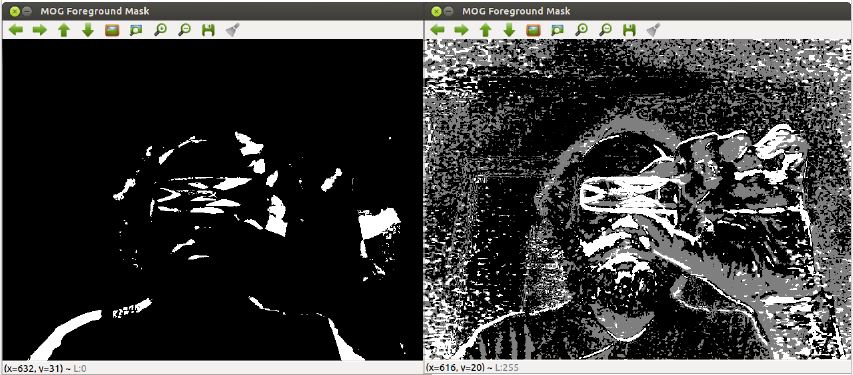

Fig. 2 compares BackgroundSubtractorMOG and BackgroundSubtractorMOG2. The MOG approach is simpler than MOG2: it produces only binary masks, whereas MOG2 produces grayscale masks (with shadow detection enabled, shadows are marked with a gray value, 127 by default). Experiments showed that in our specific case MOG performed better, as its masks contained less noise than those of MOG2. MOG2 would probably produce better results than MOG if used more effectively than in our initial approach (simply extracting the centroid of the mask).
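Because the approach above takes the centroid of the whole mask, shadow pixels in a MOG2 mask may shift that centroid away from the object. One possible mitigation, sketched below under the assumption that MOG2 runs with shadow detection enabled (foreground 255, shadows at OpenCV's default shadow value 127, background 0), is to binarize the mask before computing the centroid. In OpenCV the same effect can be obtained with threshold(mask, mask, 200, 255, THRESH_BINARY); the functions drop_shadows and count_foreground and the threshold 200 are illustrative choices, not part of the solution described here.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Binarize a MOG2 foreground mask so that only confident foreground survives.
// Assumption: 255 = foreground, 127 = shadow, 0 = background.
// Mirrors threshold with THRESH_BINARY: out = (v > thresh) ? 255 : 0.
std::vector<std::uint8_t> drop_shadows(const std::vector<std::uint8_t>& mog2_mask,
                                       std::uint8_t thresh = 200) {
    assert(thresh >= 127);  // a lower threshold would keep shadow pixels
    std::vector<std::uint8_t> out(mog2_mask.size(), 0);
    for (std::size_t i = 0; i < mog2_mask.size(); ++i) {
        out[i] = static_cast<std::uint8_t>(mog2_mask[i] > thresh ? 255 : 0);
    }
    return out;
}

// Number of nonzero (foreground or shadow) pixels, e.g. to compare masks before and after.
std::size_t count_foreground(const std::vector<std::uint8_t>& mask) {
    std::size_t n = 0;
    for (std::uint8_t v : mask) n += (v != 0);
    return n;
}
```

Comparing count_foreground before and after drop_shadows gives a rough measure of how much of a MOG2 mask consists of shadow pixels that would otherwise take part in the centroid computation.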

Summary

In this project we explored simple object tracking with the OpenCV API, using the MeanShift and CamShift algorithms together with the MOG and MOG2 background subtractors, which we also compared. Our solution performs relatively well, but it could certainly be improved by fine-tuning the histogram calculation, the MOG parameters, and other parameters. MOG usage could also be improved, as objects are currently recognized only by finding MOG mask centroids. This also calls for a better tracking window initialization process.