Ing. Peter Drahoš, PhD.

Dissertation

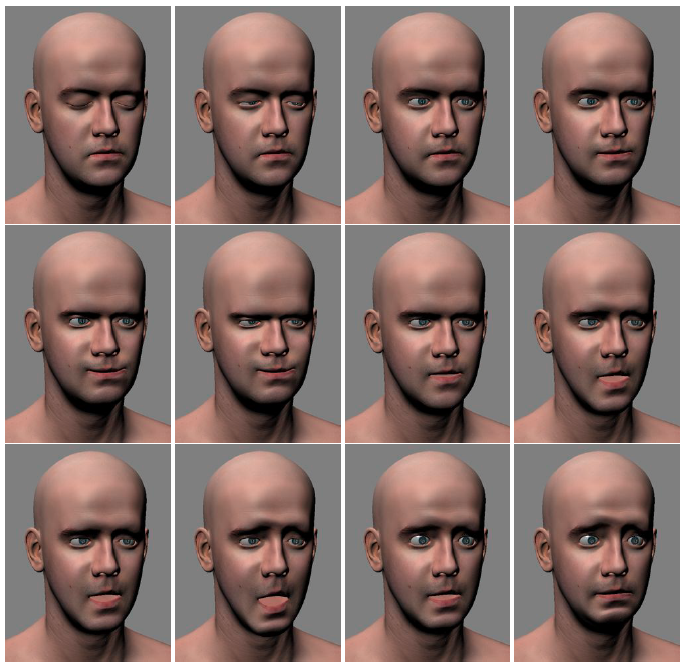

Abstract. In recent years, the average performance of computers has increased significantly, partly due to the ubiquitous availability of graphics hardware. Photorealistic rendering of human faces is no longer restricted to offline rendering and use in movies. Even portable machines and, to some degree, high-end mobile devices offer enough performance to synthesize photorealistic facial animation in real time. However, the lack of flexible and reusable animation systems for use in third-party applications remains an issue. Our work presents a straightforward approach to photorealistic facial animation synthesis based on dynamic manipulation of displacement maps. We propose an integrated system that combines various animation sources, such as blend shapes, virtual muscles, and point influence maps, with modern visualization and skin simulation techniques. Inspired by systems that synthesize facial expressions from images, we designed and implemented an animation system that uses image-based composition of displacement maps without the need to process the facial geometry. This improves overall performance and makes implementing detail scalability trivial. For evaluation, we implemented a reusable animation library and used it in a simple application that visualizes speech.
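
As an illustration only, the image-based composition mentioned in the abstract can be pictured as a weighted sum of displacement layers applied on top of a neutral displacement map. The sketch below assumes purely additive blending and stores each map as a nested list of floats. The names `compose_displacement`, `neutral`, `layers`, and `weights` are hypothetical and are not taken from the thesis or its library, which may combine its animation sources differently.

```python
from typing import List, Sequence

# A displacement map as a row-major grid of height offsets (assumed representation).
DisplacementMap = List[List[float]]


def compose_displacement(
    neutral: DisplacementMap,
    layers: Sequence[DisplacementMap],
    weights: Sequence[float],
) -> DisplacementMap:
    """Return neutral + sum(weight_i * layer_i), computed per texel.

    Assumption: additive blending. Each layer holds the offsets contributed
    by one animation source (for example a blend shape or a virtual muscle).
    """
    if len(layers) != len(weights):
        raise ValueError("each layer needs exactly one weight")

    height = len(neutral)
    width = len(neutral[0]) if height else 0

    # Copy the neutral map so the input is left unmodified.
    result = [row[:] for row in neutral]

    for layer, weight in zip(layers, weights):
        if len(layer) != height or any(len(row) != width for row in layer):
            raise ValueError("all maps must share the same resolution")
        for y in range(height):
            for x in range(width):
                result[y][x] += weight * layer[y][x]

    return result


if __name__ == "__main__":
    neutral = [[0.0, 0.0], [0.0, 0.0]]
    smile = [[1.0, 0.0], [0.0, 2.0]]   # hypothetical expression layer
    brow = [[0.0, 4.0], [0.0, 0.0]]    # hypothetical expression layer
    print(compose_displacement(neutral, [smile, brow], [0.5, 0.25]))
```

Because the blend works on the maps rather than on mesh vertices, running it at a lower map resolution is one plausible way to trade detail for speed, which is in the spirit of the detail scalability the abstract describes.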

PDF: Summary of the Dissertation Thesis: Photo-Realistic Head Model for Real-Time Animation